Zeroth-Order Stackelberg Control in Combinatorial Congestion Games

Spotlight Track poster presentation at CPAL 2026

Presented by Saeed Masiha & Sepehr Elahi at CPAL 2026.

Accepted to the Spotlight Track of the Conference on Parsimony and Learning (CPAL) 2026, held March 23-26 in Tuebingen.

| Poster session | Poster time | Poster location | Paper PDF | Poster |

|---|---|---|---|---|

| Poster Session I | Tue 24 March 4:00 - 6:00 p.m. CET | MPI Lecture Hall, Max-Planck-Ring 4, Tuebingen | Link | Link |

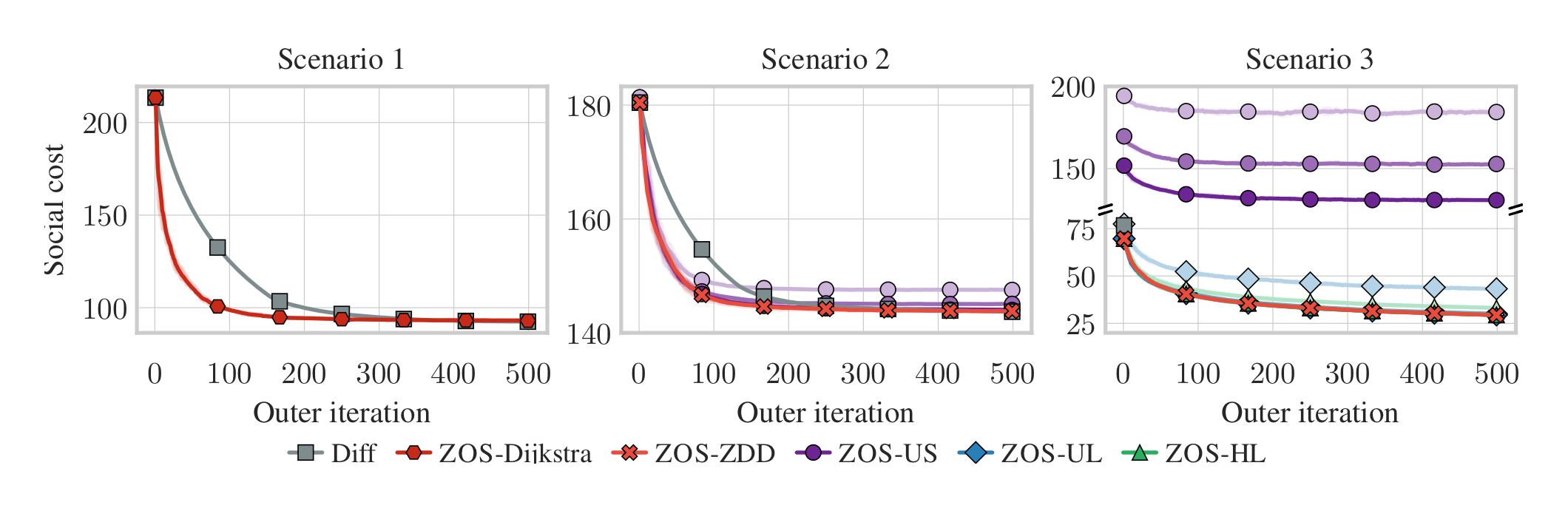

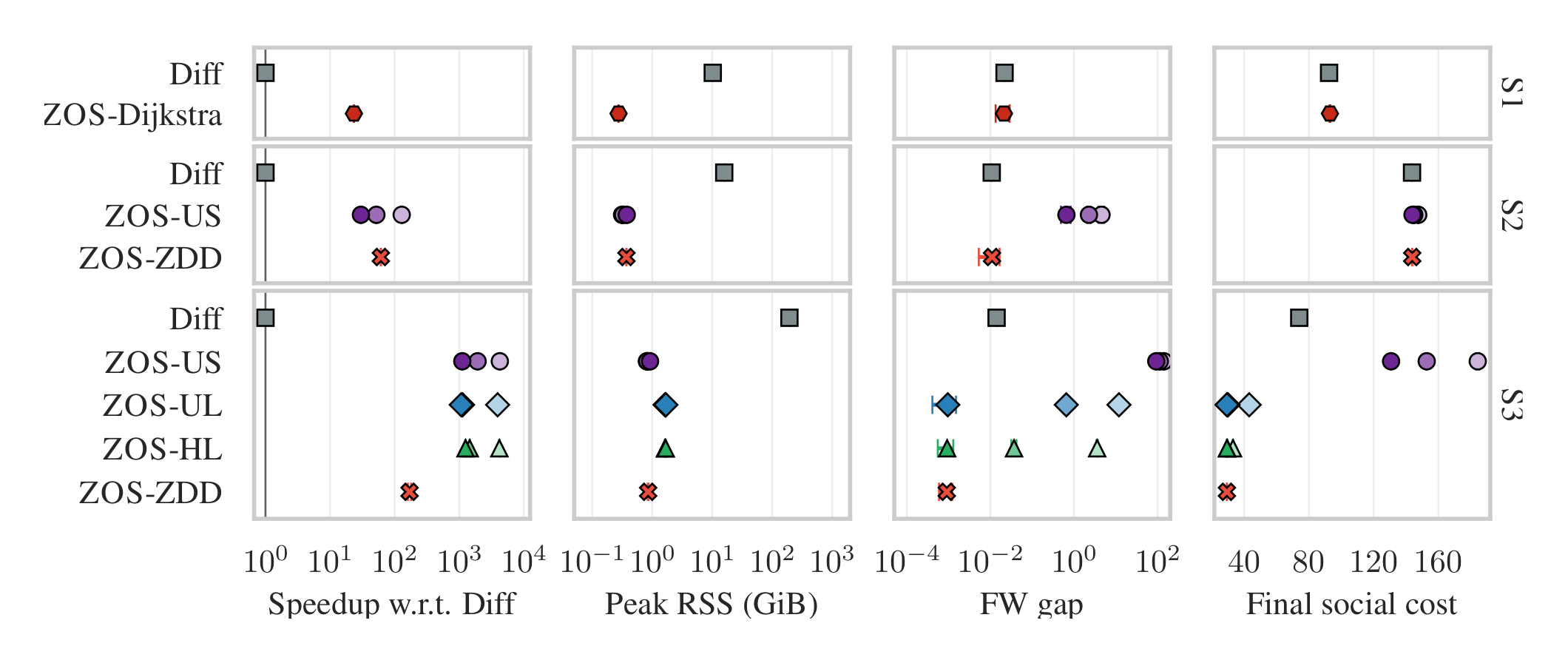

TL;DR: We study Stackelberg control in combinatorial congestion games and propose ZO-Stackelberg, a method that avoids differentiating through nonsmooth equilibria by combining a Frank-Wolfe inner solver with zeroth-order outer updates. On real transportation networks, it matches solution quality while being much faster and more memory efficient than a differentiation-based baseline.

Summary: When a leader tunes tolls, capacities, or incentives in a network, the followers choose discrete routes or other combinatorial strategies and then settle at Wardrop equilibrium. Small changes in the leader parameters can switch which strategies are active, so the true objective becomes nonsmooth and difficult to optimize by backpropagating through the equilibrium computation. We instead keep the equilibrium solver as a black box, use exact or subsampled combinatorial oracles inside a Frank-Wolfe method, and update the leader with zeroth-order queries of the true objective. This yields one framework that works across shortest-path, exact ZDD, and sampled ZDD regimes. On TNTP networks from Winnipeg, Chicago, and Philadelphia, the method achieves competitive social cost and small equilibrium gaps, with typical runtime improvements of roughly 20x-1000x per outer iteration and much lower memory use.